The six fields where artificial intelligence (AI) will offer added value in customer experience

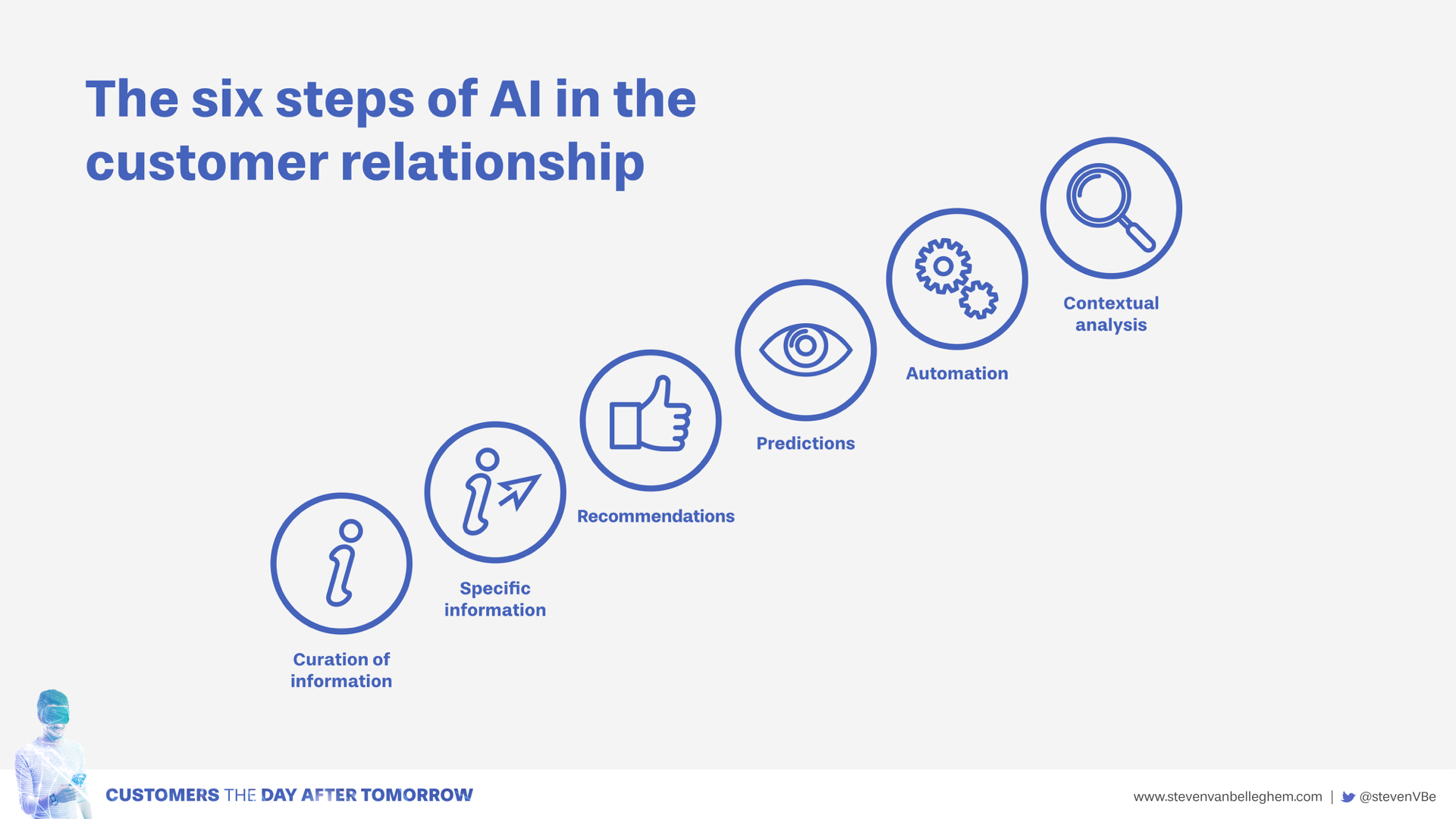

Six steps where AI can influence customer experience

Today (2018), we entrust all kinds of simple tasks to our virtual assistant, from setting our morning alarm call to timing how long it takes to boil an egg. Of course, this is little more than playing about. Even so, it is the start of the evolution in AI that will soon see companies offering significant added value to their customers. This will happen in six steps, each of which will result in ever-greater AI impact.

The six steps in the AI customer relationship:

1. Curation of information

2. Provision of customized information

3. Recommendations

4. Predictions

5. Automation

6. Contextual analysis

1. Curation of information

The current Google interface was developed in the previous century. For each search query we get dozens of pages with hundreds of links. Of course, nobody bothers to search through all those pages. We choose a link from the first three options and that’s it. Information overload is something that we all try to avoid. True, in recent years a number of innovations have been introduced to improve the quality of the search engine. Google now corrects our spelling mistakes. And when we type in a search query, Google offers us a range of options after the first few letters. In fact, it sometimes seems as though Google knows what we are looking for before we know it ourselves. Yet for all these clever innovations, it is still essentially an old interface that gives its visitors far too much irrelevant information.

Artificial intelligence helps to curate content. Once again, Facebook is an excellent example. Instead of us making the choice, computers choose what we get to see and what is blocked out. For retail platforms this is a major opportunity. On sites like Zalando you are currently offered page after page of clothes, most of which you wouldn’t wear in a thousand years. A really good AI interface will only select clothes that it knows match your taste. With data being produced in ever-increasing quantities, information overload looks set to remain a problem for years to come. AI can help you to select the correct and most relevant content for your customers.
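To make the idea of curation a little more concrete, here is a minimal sketch of how a retail site might cut a catalogue down to the items that match a shopper's taste. Everything in it is an assumption made for illustration: the `Item` class, the tag-overlap `taste_score`, the `min_score` threshold and the sample catalogue are hypothetical, and a real platform would learn taste from behavioural data rather than from hand-written tags.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    """Hypothetical catalogue entry described by a handful of style tags."""
    name: str
    tags: frozenset[str]


def taste_score(item: Item, liked_tags: set[str]) -> float:
    """Fraction of the item's tags that the shopper is known to like."""
    if not item.tags:
        return 0.0
    return len(item.tags & liked_tags) / len(item.tags)


def curate(catalog: list[Item], liked_tags: set[str],
           min_score: float = 0.5, limit: int = 3) -> list[Item]:
    """Keep only items that match the shopper's taste, best match first."""
    scored = [(taste_score(item, liked_tags), item) for item in catalog]
    relevant = [(score, item) for score, item in scored if score >= min_score]
    # Sort on the score only; ties keep their original catalogue order.
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [item for _, item in relevant[:limit]]


# Illustrative data, not taken from any real shop.
catalog = [
    Item("linen shirt", frozenset({"casual", "summer"})),
    Item("sequin jacket", frozenset({"party", "glitter"})),
    Item("denim jacket", frozenset({"casual", "denim"})),
    Item("wool coat", frozenset({"formal", "winter"})),
]
liked = {"casual", "summer", "denim"}
selection = curate(catalog, liked)
print([item.name for item in selection])
```

The shopper sees a short list instead of page after page of results. The items that fall below the threshold are simply never shown.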

2. Provision of customized information

A smartphone that suddenly lets you know it’s time for you to set off for your next meeting, taking account of the current situation on the roads, is a good example of how AI can provide customized information. The computer uses different data sources to offer content that is tailored to your personal needs.
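The "time to leave" notification can be sketched in a few lines. This is only an illustration under stated assumptions: the function names, the `traffic_factor` multiplier (1.0 for normal roads, higher for congestion), the five-minute buffer and the ten-minute notification lead are all hypothetical, and a real assistant would pull the meeting from a calendar and the travel time from a live traffic service.

```python
from datetime import datetime, timedelta


def departure_time(meeting_start: datetime,
                   usual_travel: timedelta,
                   traffic_factor: float,
                   buffer: timedelta = timedelta(minutes=5)) -> datetime:
    """Combine calendar and traffic data into a personal departure time."""
    # Scale the normal journey time by the current congestion level.
    expected_travel = usual_travel * traffic_factor
    return meeting_start - expected_travel - buffer


def should_notify(now: datetime, leave_at: datetime,
                  lead: timedelta = timedelta(minutes=10)) -> bool:
    """True once we are within `lead` of the moment the user has to leave."""
    return now >= leave_at - lead


# Illustrative values: a 14:00 meeting, a 30-minute drive, heavy traffic.
meeting = datetime(2018, 6, 1, 14, 0)
leave_at = departure_time(meeting, timedelta(minutes=30), traffic_factor=1.5)
print(leave_at.strftime("%H:%M"))
print(should_notify(datetime(2018, 6, 1, 13, 0), leave_at))
```

The point of the sketch is that neither data source is useful on its own. The value for the customer comes from combining them into one tailored message.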

Concrete questions can now be answered almost instantly by smart interfaces. That’s how Google Home works. Recently, my children asked me about the colours of the rainbow. In all honesty, I hadn’t a clue. ‘Dad, you’re useless! Google, what are the seven colours of the rainbow, please?’ Within seconds, Google Home was ready with the answer: ‘The seven colours of the rainbow are red, orange, yellow, green, blue, indigo and violet’. Nowadays, these kinds of relatively simple questions are being answered with increasing frequency by talking machines. In the future, much more complex questions will also be possible. Before long, our virtual assistant will become our main source of help for all our daily queries and problems. AI provides specific responses to specific requests.

This can be a fantastic application for governments. Many thousands of citizens have concrete questions about taxes, subsidies, opening hours, and a hundred and one other things. Wouldn’t it be great if you could put a concrete question to an administrative service and get a concrete (and correct) answer straight away? As consumers, we would no longer need to waste time searching for the information we need. It will be handed to us on a plate, with minimal delay.

3. Recommendations

Recommendations have been part of most e-commerce sites for a number of years. In the years ahead, the quality of these recommendations will improve. The more data that is available about an individual user, the more targeted the resulting recommendations will be. This is set to play a huge role in the financial sector. BlackRock is the largest asset management player in the world. Since March 2017, they have been getting recommendations from their AI interface about the buying and selling of shares. The computer processes all available data and comes up with well-founded suggestions about what the company should do.

4. Predictions

Content curation, customized information and recommendations are already part and parcel of all our daily lives. The growth of AI will lead to quality improvements in all these fields. At present, the fourth step – predictions – is less familiar.

Prediction is at the very heart of artificial intelligence. Self-driving cars need to predict what other drivers are going to do, so that they can make the right decisions. A bank wants to know in advance if a customer is likely to repay his/her loan. As the quality of AI predictions increases, the products that are dependent on the predictions will fall in price. The agricultural industry, mobility and health care are three sectors that can benefit hugely in this manner from accurate predictions. Decisions will be taken more quickly, fewer mistakes will be made and the quality of the end product will improve correspondingly.

The same process will also be seen at company level. Looking at the elements in your business process that can benefit from more accurate predictions will become a standard part of most companies’ AI strategy. Scriptbook is a Belgian company that works for several of the major studios in Hollywood. The studios send the first draft of a film script to Scriptbook. At the time, it is not yet known which actors and actresses will be playing the different roles. Even so, this input, a raw script, is enough for Scriptbook to predict the likely box office takings of the resulting film. True, it is not an exact science, but it helps the studios to better estimate their risks and so take better decisions. Every company has activities that can benefit from accurate predictions.

5. Automation

The next step leads us towards an automated world. In a first phase, many clearly defined steps in the customer journey will be mechanized. Chatbots can take over various aspects of customer service. Self-driving vehicles can take over numerous transport functions. The basic output of the accounts department, the writing of simple texts and instructions, the settlement of routine legal matters: these are all further examples of areas where automation can play a major role.

The second phase involves the full automation of many aspects of our daily lives. Our cars will drive us, instead of us driving them. Our basic daily necessities (washing powder, coffee, corn flakes, etc.) will be ordered and delivered automatically, on the basis of input from a machine. Robots will help more around the house. They will also help in care homes and a thousand and one other places. It is not yet clear when we will reach this phase. Pieter Abbeel, a Belgian professor at UC Berkeley, is one of the top five scientists in the field of artificial intelligence and robotics. Berkeley is also currently the best university in the world in this area of expertise. According to Pieter, the AI evolution will start slowly but then accelerate rapidly. “At a certain moment, we will be confronted with serious job losses in many sectors. It is difficult to say when this critical point will arrive, but I believe it will be sooner than everyone thinks.”

In an automated world, the sale of a product can happen completely autonomously. We are moving from e-commerce to a-commerce: automated commerce. On the basis of its sensor data, the coffee machine knows when your supply of coffee is almost exhausted. Consequently, it will independently place an order with your local e-commerce supplier. In the supplier’s warehouse, self-driving vehicles will prepare your order with equal independence. And it will be delivered to your home by a driverless lorry. A small self-propelled robot will take the delivery to your front door – and, after that, the rest is up to you! Does it sound futuristic? Possibly. But the building blocks are already in place to make this happen. Amazon is currently working on sensors for household appliances (like your coffee machine). Mercedes is developing self-driving lorries with drones and robots that can make deliveries. They both plan to launch their innovations by 2020…
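The first link in this a-commerce chain, the coffee machine that reorders on its own, boils down to a simple threshold rule on sensor data. The sketch below is a hypothetical illustration: the `CoffeeMachine` class, its threshold and order quantity are assumptions, and the `orders` list merely stands in for a call to a supplier's ordering system, which is not modelled here.

```python
from dataclasses import dataclass, field


@dataclass
class CoffeeMachine:
    """Hypothetical connected appliance that reorders its own supplies."""
    capsules_left: int
    reorder_threshold: int = 10
    reorder_quantity: int = 50
    order_pending: bool = False
    # Stand-in for the supplier's ordering system (an assumption).
    orders: list[int] = field(default_factory=list)

    def brew(self) -> None:
        """Use one capsule, then check whether stock is running low."""
        if self.capsules_left == 0:
            raise RuntimeError("out of coffee")
        self.capsules_left -= 1
        self._maybe_reorder()

    def _maybe_reorder(self) -> None:
        # Order once when stock is low; do not repeat while a delivery is due.
        if self.capsules_left <= self.reorder_threshold and not self.order_pending:
            self.orders.append(self.reorder_quantity)
            self.order_pending = True

    def receive_delivery(self, quantity: int) -> None:
        """Restock and allow a new order the next time stock runs low."""
        self.capsules_left += quantity
        self.order_pending = False


machine = CoffeeMachine(capsules_left=12)
for _ in range(3):
    machine.brew()
print(machine.orders)
machine.receive_delivery(50)
print(machine.capsules_left)
```

The `order_pending` flag is the one design choice worth noting. Without it, every cup brewed below the threshold would trigger another order before the first one had arrived.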

6. Contextual analysis

In this final phase, AI will be able to perfectly understand the context of the consumer. When we reach that point, Netflix recommendations will be able to take account of the current mood of its viewers. If you are down in the dumps, it will suggest something to cheer you up. At the moment, it can only take account of your past viewing preferences and those of similar customers. Once the context can be analyzed properly, the level of the recommendations – and the level of automation – will improve dramatically.

Imagine you have just bought a new house. You are probably in seventh heaven. At a moment like that, you want to listen to music that is different from what you usually listen to. Something special. Something celebratory. To make this possible, the AI interface will not only search for data in a well-defined silo, but will scan all available data. The complexity of such an analysis is huge. Today, an algorithm is asked to make recommendations on the basis of a well-defined task in a specific set of data. In the world of contextual analysis, the AI interface will need to trawl through all – I repeat, all – available data to reach its conclusion. The computing power that this requires is almost unimaginable.

You can best compare it with the way people converse with each other. At the moment, this is a form of empathy that is only possible between people. People have the ability to take account of all the different parameters when they talk to someone else. For example, they can see whether you are feeling happy or feeling sad. The day that a computer or robot is capable of making the same analysis, that will be the day when one of the most important distinctions between humans and machines falls away.